Back in the old days (say, 2020), only major players like the Russian troll farm in St. Petersburg could run a big disinformation campaign. Those days are over. Now it doesn't take a big team or lots of money to make deepfakes—videos that are phony but look very real. People with modest computer skills can do it using new artificial intelligence software. Just as social media gave every Tom, Dick, and Harry a worldwide platform for hate speech, new AI technology gives at least thousands (and soon millions) of people the ability to make deeply false political ads.

Fake photos are easy:

Videos like these are a bit harder:

But by 2024, motivated high-school kids will probably be able to flood the Internet with passable fakes. Many people are not aware of how good the technology already is, and millions will believe the fake ads, especially when Tucker Carlson shows them on his program and says whatever the Fox News lawyers let him say, like: "Look at these and judge for yourself." Even in the best case, where people are aware that fake photos and fake videos are everywhere, many may simply conclude: "There is no reality anymore."

It is not only presidential races that are threatened by deepfakes. They can be made against any candidate at any level. While a deepfake used in a presidential race will get plenty of attention from the national media, a deepfake in a race for state representative probably won't. If such fakes work, it won't be long before every campaign has a team of people whose job it is to make outrageous fake photos and videos about the opposing candidate. Fake audio recordings that purport to catch a candidate on an "open mic" could also become common.

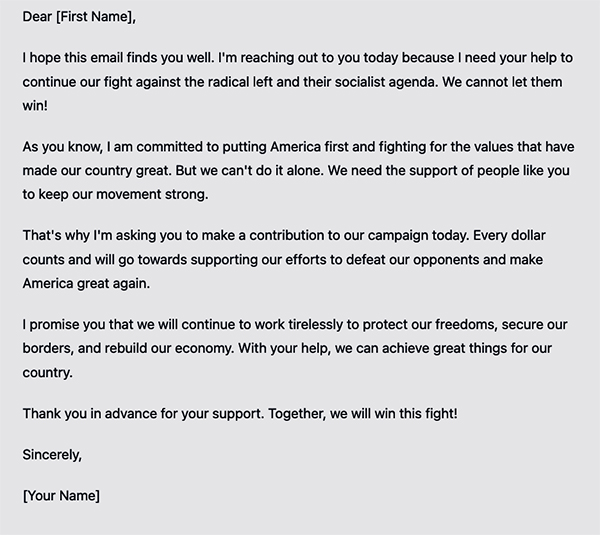

And simple stuff is already happening. ChatGPT can already write plausible e-mail fundraising ads like this computer-generated one:

The next step is to combine this technology with the databases both parties have that include tens of millions of voters. These databases contain a tremendous amount of information about everyone, from how they are registered, to what kind of car they have, what organizations they belong to, what magazines they subscribe to, and much, much more. Now imagine the AI program being told to write an ad aimed at one specific person, purporting to be a captured e-mail from the other side, and designed to enrage that person. For example, imagine Republican NRA members getting a "copy" of a (fake) e-mail Joe Biden supposedly sent to his supporters in which he promises to have the FBI go out and confiscate all AR-15s as soon as his new term begins. The ad could be written by the software in a convincing way. Or imagine an ad sent to Democrats who have donated to the ACLU, purportedly from the Trump campaign, promising to suspend the Constitution and declare martial law on Inauguration Day.

Given enough servers, everybody on the mailing list could get a different, personalized, plausible-sounding (but fake) e-mail that is precisely tuned to get the recipient into a blue funk. That is not done yet because writing millions of highly tailored personal e-mails requires too many campaign interns. But if a computer can churn out thousands of these personalized e-mails per hour, and they look realistic, then do you really think the campaigns will say: "That would be unethical so we won't do it"? Do you think the genie is going back into the bottle any time soon? We're not so sure. (V)